AI customer support can now handle 80% of conversations remarkably well. Most platforms can answer FAQ-style questions, look up order statuses, and walk customers through standard processes. The best platforms go much further, taking complex actions and resolving genuinely difficult situations.

But there's a gap that the industry doesn't like to discuss: what happens when AI can't handle a conversation?

The answer, for almost every AI support platform on the market, is: punt it to a third-party ticketing system. And this is where things fall apart. It’s the problem we built Resolution Loop to solve.

The anatomy of a broken escalation

Here's what a typical AI-to-human escalation looks like today:

Customer is mid-conversation with AI on WhatsApp

AI determines it needs human help

Conversation is copied into Zendesk (or Intercom, Salesforce, etc.)

A ticket is created in the external system

A human agent picks it up in that system

The customer may receive the response on a different channel (e.g., email instead of WhatsApp)

The human resolves the issue. The customer may or may not be satisfied.

The AI learns nothing

Each new step introduces failure modes. Cross-system ID mapping breaks. Twilio-backed flows create double message ingestion. Conversation threads split across systems. The customer experiences a jarring context switch: they were chatting on WhatsApp, now they're getting emails.

And the biggest failure: the human resolution vanishes into the ticketing system. The AI never sees it. The same type of issue escalates again tomorrow, and the day after, and the day after that.

Why the handoff problem persists

The root cause is architectural. Most AI support platforms were built as a layer on top of existing ticketing systems. They integrate with Zendesk, Intercom, and Salesforce because that's where the humans already work.

This made sense when AI was handling 10-20% of conversations and humans handled the rest. But as AI handles 50%, 60%, 70% of conversations, the economics flip. Now you're maintaining an entire ticketing system infrastructure for a decreasing minority of conversations, and the integration between AI and human is the most complex, brittle part of the system.

It's like building a bridge where the middle section is held together with duct tape. The more traffic you put on it, the more the weakness matters.

A different architecture: humans inside the AI platform

At Lorikeet, we've taken a different approach. Instead of sending escalations to an external system, we brought humans into Lorikeet.

Resolution Loop is our native escalation management system. When the AI determines a conversation needs human help, the human works directly inside Lorikeet: same platform, same conversation thread, same customer context.

We've built two distinct modes for different situations:

Take Over: For conversations where a human needs to drive. The agent claims the ticket, sees the full conversation history, and responds directly to the customer through the same channel (WhatsApp, SMS). The AI observes, and after resolution, the system captures what the human did to inform future handling.

Steer: For conversations where the AI is almost there but needs expert input. The AI privately asks a human: "How should I handle this?" The human provides the answer ("here's how you calculate a refund for a partial return") and the AI continues the conversation using that guidance. Critically, that instruction is saved to the knowledge base permanently.

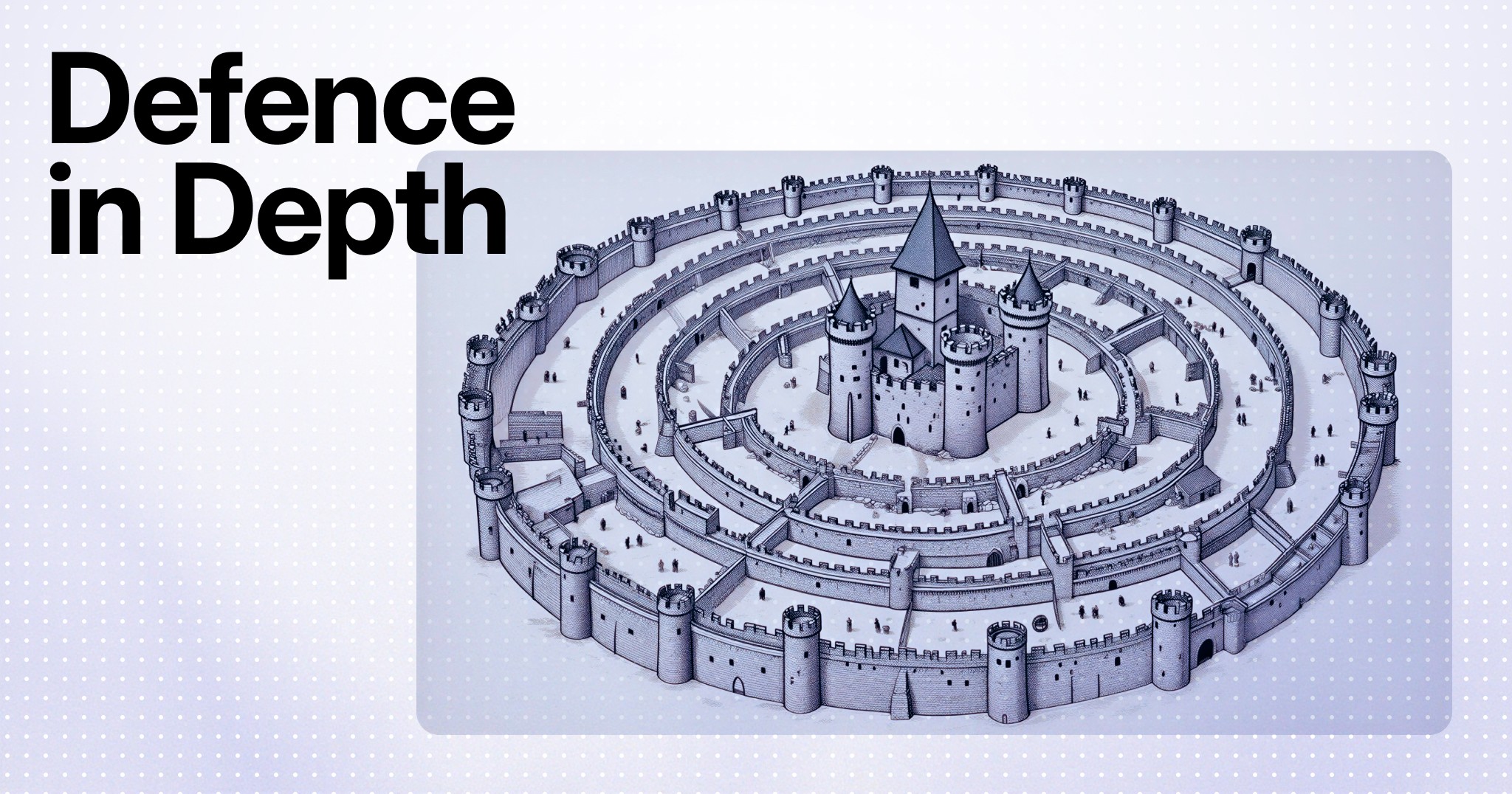

This builds on what we've described as defense in depth: a layered approach to AI accuracy where each system assumes the others will occasionally fail. The base agent, simulations, runtime guardrails, and post-ticket QA each catch different failure modes. Resolution Loop is the layer beneath all four. When the automated defense can't resolve a conversation, a human steps in, inside the same platform and the same conversation thread. And what they do feeds back into every automated layer: knowledge base updates, new simulation scenarios, refined QA benchmarks. The flywheel gets stronger with every escalation.

The difference between these modes matters. Take Over is what you'd expect from any escalation system. Steer is what changes the economics of support.
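To make the contrast between the two modes concrete, here is a minimal sketch in Python. It is not Lorikeet's implementation: the names `Mode`, `Conversation`, and `escalate` are hypothetical, and the knowledge base is modeled as a plain list purely for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum


class Mode(Enum):
    TAKE_OVER = "take_over"  # the human drives the conversation
    STEER = "steer"          # the human advises privately, the AI keeps replying


@dataclass
class Conversation:
    channel: str                                  # e.g. "whatsapp" or "sms"
    messages: list = field(default_factory=list)  # (sender, text) pairs sent to the customer


def escalate(conv, mode, human_input, knowledge_base):
    """Apply a human intervention inside the same conversation thread."""
    if mode is Mode.TAKE_OVER:
        # The human replies to the customer directly, on the original channel.
        conv.messages.append(("human", human_input))
    else:
        # The guidance stays private: it is saved for reuse, and the AI
        # writes the customer-facing reply based on it.
        knowledge_base.append(human_input)
        conv.messages.append(("ai", f"(reply drafted using guidance: {human_input})"))
```

In this sketch, only the Steer branch writes to the knowledge base at the moment of intervention, which is why it is the mode that compounds.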

The learning loop: why escalation rates should decline

The core insight behind Steer is that most escalations happen for a finite set of reasons. A customer has an edge case the AI wasn't trained on. A policy changed and the knowledge base wasn't updated. A workflow doesn't cover a specific scenario.

When a human provides a Steer instruction ("this is how you handle refunds for customers who paid with store credit"), that answer gets saved to the knowledge base. The next time the same situation arises, the AI handles it autonomously. No escalation needed.

Over time, this creates a compounding effect. Each week, the AI handles situations that would have been escalations the week before. The escalation rate naturally declines, not because you're suppressing escalations, but because you're eliminating the knowledge gaps that cause them.
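The compounding effect can be illustrated with a toy simulation. This is a sketch under simplifying assumptions, not a description of the product: conversations are reduced to topic strings, the knowledge base is a dictionary with exact-match lookup, and `run_week` and `ask_human` are hypothetical names. The sample topics are invented for the example.

```python
def run_week(topics, knowledge_base, ask_human):
    """Handle one week of conversations; return that week's escalation rate."""
    escalations = 0
    for topic in topics:
        if topic not in knowledge_base:
            # Knowledge gap: escalate once, then keep the human's answer.
            knowledge_base[topic] = ask_human(topic)
            escalations += 1
    return escalations / len(topics)


def ask_human(topic):
    # Stand-in for a human agent's Steer instruction.
    return f"guidance for {topic}"


kb = {}
week1 = ["store-credit refund", "partial return", "store-credit refund", "address change"]
week2 = ["partial return", "store-credit refund", "address change", "gift card"]

rate1 = run_week(week1, kb, ask_human)
rate2 = run_week(week2, kb, ask_human)
print(rate1, rate2)  # prints: 0.75 0.25
```

The rate falls in the second week because three of its four topics were already answered by a human in the first; only the new topic escalates.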

Our north star metric: does the escalation rate go down over time because the AI is learning from human resolutions?

This is fundamentally different from "self-training AI" approaches where models learn from their own outputs (a feedback loop that can degrade quality over time). Here, the AI learns from verified human expertise, the same way you'd train a new team member.

The result is a system where escalation isn't a cost center. It's the mechanism that makes every other layer of the defense stack more reliable. Each human resolution is a data point that tightens guardrails, generates simulation scenarios, and raises QA benchmarks. The best escalation system is one that makes itself progressively unnecessary.

Starting with WhatsApp and SMS

We deliberately launched Resolution Loop for asynchronous messaging channels first. Three reasons:

Conversations already live in Lorikeet. For WhatsApp and SMS, messages flow through Lorikeet directly. There's no round-trip to an external system, which means adding human responses to the same conversation is architecturally clean.

Async removes the live-chat juggler problem. Managing real-time chat queues is operationally complex: wait times, capacity planning, agent presence. Async channels let humans respond thoughtfully without the pressure of a live queue.

The pain is sharpest here. When a subscriber uses Zendesk for email but Lorikeet for WhatsApp, escalations from WhatsApp to Zendesk create double message ingestion and broken thread continuity. Keeping everything in Lorikeet eliminates this entirely.

Chat and email modes will follow, along with enhanced capabilities like round-robin assignment, macros, SLA tracking, and deeper Coach integration.

What this means for the support stack

Resolution Loop introduces a middle state between "AI handles it" and "human handles it": AI handles it with help. This expands the addressable automation surface significantly. Conversations that were previously binary escalations (AI fails, human takes over) can now be collaborative (AI asks, human answers, AI continues).

For support teams running Lorikeet alongside a ticketing system today, this is the beginning of consolidation. When humans can work inside Lorikeet for the conversations that need them, the question becomes: do you still need that ticketing system seat?

For regulated and complex businesses, this architecture matters even more. Every escalation is observable in real time with compliance guardrails built in: who handled it, what was said, how it was resolved. The full audit trail lives in one platform, and every human intervention feeds back into the accuracy stack. The depth of this system is what makes Lorikeet purpose-built for industries where getting it wrong has consequences.
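As a rough illustration of what a single audit-trail entry might capture, the sketch below models the three questions above (who handled it, what was said, how it was resolved) as an immutable record. The `EscalationRecord` type, its field names, and `record_escalation` are assumptions made for this example, not Lorikeet's actual schema.

```python
from dataclasses import dataclass, asdict
from datetime import datetime, timezone


@dataclass(frozen=True)
class EscalationRecord:
    conversation_id: str
    channel: str      # e.g. "whatsapp"
    handled_by: str   # who handled it
    transcript: tuple # what was said, as (sender, text) pairs
    resolution: str   # how it was resolved
    resolved_at: str  # ISO 8601 timestamp, UTC


def record_escalation(conversation_id, channel, handled_by, transcript, resolution):
    """Build an immutable audit entry for one resolved escalation."""
    return EscalationRecord(
        conversation_id=conversation_id,
        channel=channel,
        handled_by=handled_by,
        transcript=tuple(transcript),
        resolution=resolution,
        resolved_at=datetime.now(timezone.utc).isoformat(),
    )
```

Freezing the dataclass means an entry cannot be reassigned field by field after it is written, a small nod to the idea that audit records should be append-only.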

Questions to ask your vendor

If you're evaluating AI support platforms, here's what to ask about escalation:

Where do escalated conversations live? If the answer is "your existing ticketing system," ask about thread continuity and context preservation across the handoff.

Does the AI learn from human resolutions? Not "does it track that an escalation happened" but: does the actual content of what the human did to resolve the issue feed back into the AI's knowledge base?

Can a human guide the AI without taking over? The difference between "take over" and "steer" is the difference between replacing the AI and teaching it. Only one of those compounds.

What happens to your escalation rate over time? If the architecture doesn't have a path to learning from human resolutions, escalation becomes a permanent cost center instead of a shrinking one.

Is escalation channel-native? If a customer is on WhatsApp, does the human respond on WhatsApp, or does the response arrive via email?

Do you get real-time observability with compliance guardrails? For regulated industries, every escalation needs a full audit trail: who handled it, what was said, how it was resolved. If that requires a separate monitoring layer, ask why.

Resolution Loop is available today for Lorikeet subscribers using SMS and WhatsApp channels. Contact us to learn more.