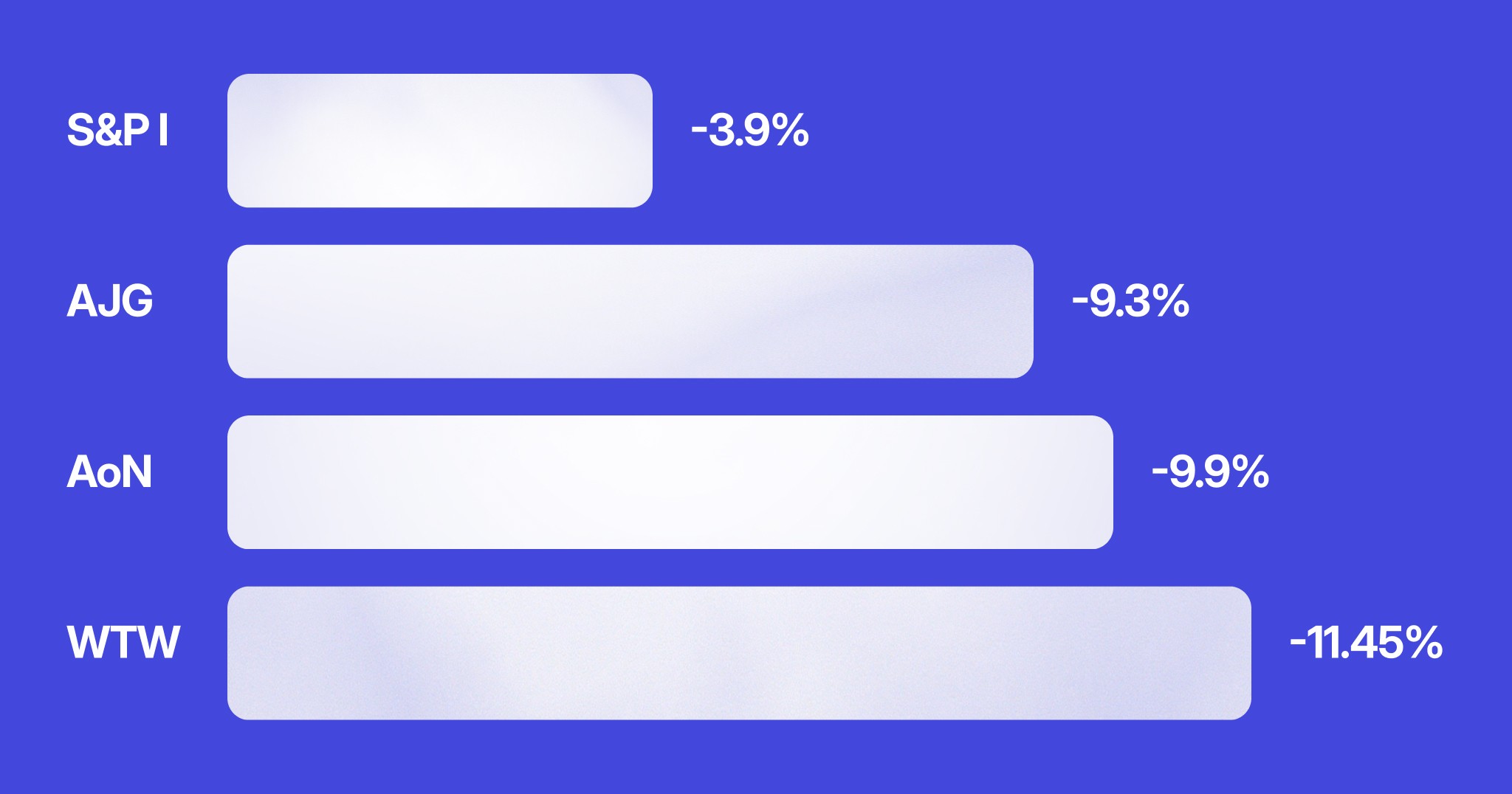

On February 9th, shares in Willis Towers Watson fell 11.45%. Aon dropped 9.9%. Arthur J. Gallagher, 9.3%. Not bad earnings. Not a lawsuit. Not a scandal. OpenAI had approved an insurance app.

The app was called Tuio. Users could get a personalised home insurance quote inside ChatGPT without ever visiting an insurer's website. The market understood immediately what this meant: if consumers can get insurance through a conversation with a generalist AI, they might not need a broker at all.

This is what disintermediation looks like in practice. It wasn't a gradual, years-long erosion. It happened on a regular Tuesday.

At Insurtech Live Australia in February, Lorikeet CEO Steve Hind made a point that ended up being cited by multiple other speakers across multiple panels that same day. The CTO of Blue Zebra Insurance built a separate argument off it. The Open Insurance panel referenced it. The moderator of a later session called back to "the idea from this morning," without attribution.

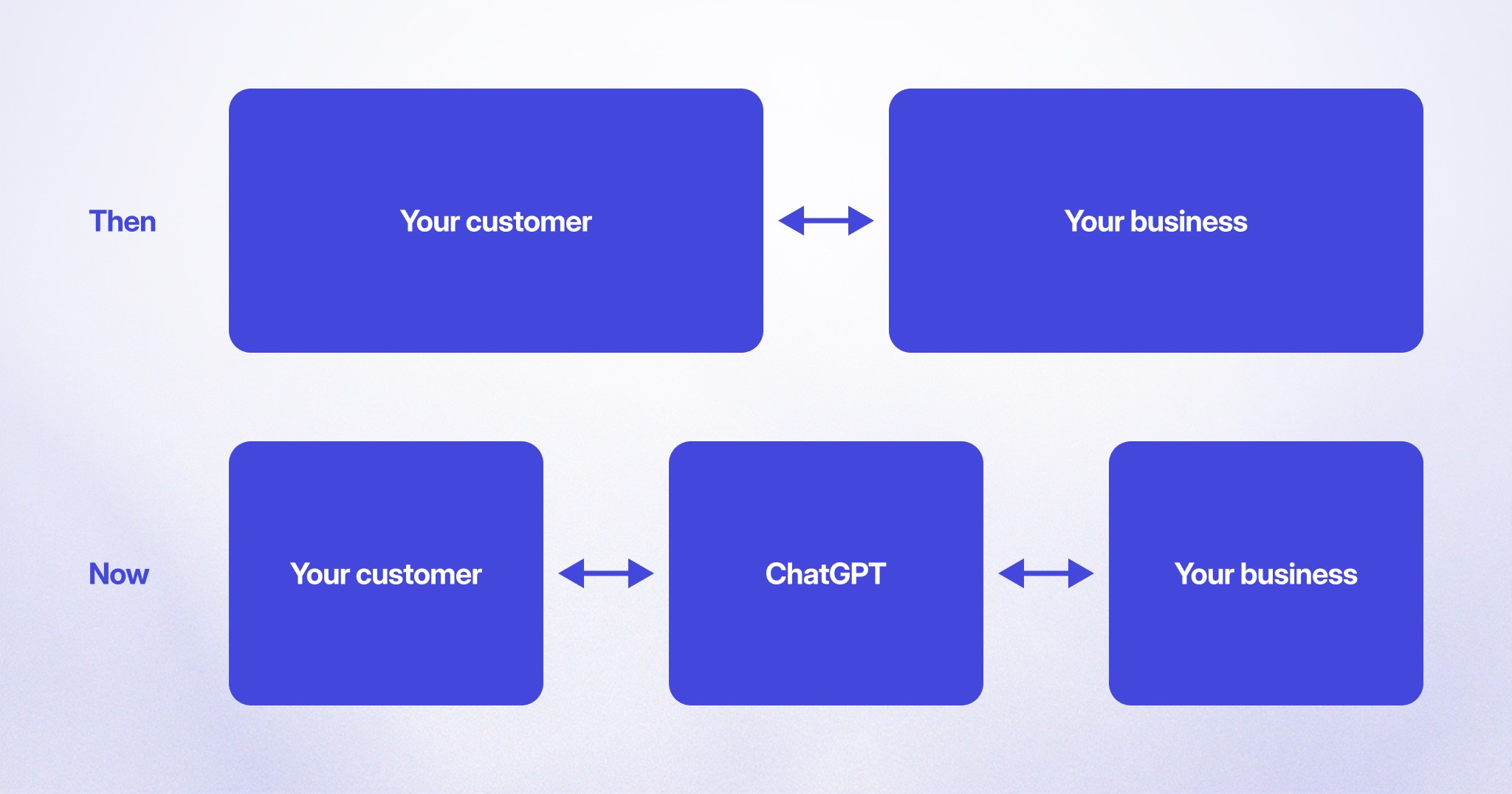

The idea: companies in regulated industries that don't provide a high-quality, first-party AI concierge will be permanently disintermediated from their customer relationships by generalist AI.

It resonated not because it was novel but because it felt inevitable. Consumer adoption of tools like ChatGPT has already reset what people expect from digital services. They've experienced what it's like to get an instant, thoughtful, conversational answer to a complex question. Now they wonder why their bank makes them navigate a phone tree. The baseline has shifted, and there's no shifting it back.

The failure mode that doesn't get talked about enough is the regulatory trap.

Executives at regulated companies – insurers, banks, healthcare providers – look at AI deployment and say: "we'd love to, but we can't give advice." They're worried about regulations around financial advice, clinical negligence, insurance licensing. So they hold back. They wait for the rules to settle. They treat caution as a form of customer protection.

Meanwhile, their customers aren't waiting. They're asking ChatGPT.

In October 2025, OpenAI updated its usage policies to explicitly prohibit ChatGPT from providing "personalized professional advice" in medical, legal, and financial domains. This was widely reported as OpenAI tightening up its safety posture. It wasn't. Multiple analyses noted that ChatGPT continues to provide substantial assistance in these areas when prompted – the policy is a legal disclaimer, not a technical block. Bloomberg Law was direct about it: the ToS update functions primarily as a "liability shield" to make it harder for users to pursue claims when the advice is wrong.

So here's the actual situation. You're holding back AI deployment to protect your customers from bad AI advice. OpenAI is offering that same advice, disclaimer attached, with no liability and no accountability when it's wrong – while simultaneously recruiting your most recognisable competitors as data partners inside ChatGPT Health.

If you don't deploy AI because you're worried about financial advice regulations, your customers will just get fully unregulated financial advice from ChatGPT. What you thought was protection is actually surrender.

Weight Watchers is the case study worth telling in full.

Before filing for Chapter 11 bankruptcy in May 2025, Weight Watchers had already deployed a customer-facing AI agent – built by Sierra, the Bret Taylor startup – that handled roughly 70% of all customer service sessions without human involvement. Customer satisfaction was 4.6 out of 5. Members were reportedly exchanging heart emojis with the AI.

By any deflection metric, it was working. But it wasn't doing what members actually needed. It was handling inbound contacts about existing problems. It wasn't proactively managing the weight loss journey. It wasn't connected to health data. It wasn't taking actions that changed anything for the customer. It was, in the language that most support AI is sold in, very good at resolving queries.

Then ChatGPT Health launched, with Weight Watchers named as one of the early partners. The pitch: WW would provide personalised GLP-1 meal planning through ChatGPT conversations. The company got distribution. OpenAI got the customer relationship.

Weight Watchers had deployed AI and still ended up disintermediated, because there's a difference between having AI in support and having AI that gives customers a reason to stay in your ecosystem. A deflection chatbot doesn't provide that reason. Only an AI that actually does things – that accesses your systems, takes actions on behalf of customers, produces outcomes they couldn't get elsewhere – gives them a reason to keep coming back to you rather than to whichever generalist platform sits in front of them.

Jamie Hall, Lorikeet's co-founder, spent years at Google Brain working on LaMDA – conversational AI that could discuss anything beautifully. His reflection on what that work taught him: "beautiful conversation doesn't refill medications or reschedule appointments." That's the gap that matters.

Here's the thing about ChatGPT that makes this more complicated than it first appears: it can't actually do the job in regulated domains. Not really.

In February 2026, a University of Oxford study published in Nature Medicine tested over a million prompts across leading AI models. Chatbots identified the correct medical condition in roughly 33% of real-world cases, and chose the correct course of action less than 44% of the time. The models also confidently repeated demonstrably false medical claims when they were phrased in credible-sounding language.

In insurance, something even more immediate is already happening. AI browsers are being used today to fill out insurance applications on behalf of consumers – automating the form, the responses, the submission. If a customer's AI agent hallucinates an answer ("no, my car has no existing damage"), and they later make a claim that contradicts the application, they may receive nothing. The consumer bears the cost of the AI's mistake. The insurer bears the reputational one.

One wrong word in a financial services policy document recently cost a major insurer a nine-figure FCA fine. The liability math for AI-generated advice in regulated industries is not abstract.

Which brings the argument full circle. You can't protect your customers by refusing to deploy AI – they'll just get worse AI elsewhere. And you can't protect them by pointing them at ChatGPT – it won't be accountable when it's wrong, and it can't take the actions that make the advice worth anything anyway.

The only answer that actually works is to build something better than ChatGPT for your specific domain.

"Better than ChatGPT" is a specific bar, not a vague one.

It doesn't mean more conversational – ChatGPT has that comprehensively covered. It means connected to your systems, constrained by your rules, accountable through your processes, and capable of taking actions that actually change a customer's situation. The concierge model that's been a luxury product for wealthy individuals – someone who knows the systems, makes the calls, navigates the complexity on your behalf – is becoming accessible to every customer. The companies that capture that relationship for their customers will have a moat. The ones that cede it to a generalist platform will have a contact centre.

The framing that crystallised it internally came from a conversation about what health-tech companies in the GLP-1 space are actually at risk of losing. If the AI experience inside a branded app isn't meaningfully better than what a customer can get from ChatGPT for free, there's no reason to stay in that ecosystem. They get their health advice from ChatGPT and buy their medication from whichever pharmacy is cheapest. The customer relationship – and everything that flows from it: recurring revenue, data, loyalty, advocacy – is gone.

The winning first-party AI doesn't just answer questions. It refills the prescription. It reschedules the appointment. It checks the policy and tells the customer what they're actually covered for. It does the things that keep your company in the loop, and it keeps the customer relationship with you rather than with whichever platform sits between you and your customer.

Questions worth asking yourself

The Tuio moment – one app approval, billions in market cap erased in a single session – suggests the window for getting this right is shorter than it might look from inside an annual planning cycle. So, a few things worth testing:

What does a customer find when they go to ChatGPT and ask about your product or service? Have you actually run that conversation and seen what it says?

Does your AI handle contacts, or does it take actions? If it's the former, what's the specific thing a customer needs that you're not giving them – and who's getting credit for providing it instead?

If you're holding back on deployment for regulatory reasons, what's your model for the customers who won't wait? Where are they going, and what are they being told when they get there?

And the hardest one: if a generalist AI platform became the primary interface between your customers and your product, what does your business look like in five years?

Companies that treat these as hypothetical tend to find them becoming very concrete on a Tuesday in February, when the stock market decides the answer for them.
