How fintech sales objections reveal which AI support vendors were built for regulated industries, and which ones are pretending.

Three months into a security review, the contract is ready and the demo was impressive, but the AI support vendor still can't confirm where customer conversation data is stored, or whether it stays in a jurisdiction that satisfies the company's regulatory obligations. The vendor's response: "We're working on it."

This is an architecture problem, and it is the single most common experience fintech CX leaders report when evaluating AI support vendors. Every fintech raises it. The question is why.

Three months in a security review

Most AI support products were designed for e-commerce. High-volume, low-stakes, FAQ-heavy. They work brilliantly there. "Where's my order?" is a solved problem.

But the ticket that matters in fintech is not "where's my order" - it's "why was my transfer blocked," "I need to dispute this charge before my rent bounces," or "my account has been frozen and I can't access my funds." These tickets touch regulated systems. They require audit trails and they carry real financial consequences for the customer.

When a vendor gets stuck in your security review for three months, that tells you something about the product's bones. The data residency controls, the SOC 2 processing integrity evidence, the compliance documentation - these things either exist in the architecture or they don't. You cannot retrofit data sovereignty into a product that was built assuming it doesn't matter.

The same pattern shows up in integration conversations. Fintech CX leaders consistently report that vendors underestimate the engineering lift required to connect to regulated systems: fraud detection logic that's too nuanced to codify as simple rules, KYC verification workflows, transaction monitoring systems. The vendor's demo looks clean, but the reality involves engineering teams that are already stretched and CS leaders who can't override their security team's veto - nor should they.

None of this is unreasonable on the fintech's part. It's the vendor revealing that their product was built for a different customer.

The latency tax on financial anxiety

Latency matters differently in financial services.

A 30-second wait while someone checks their order status is mildly annoying. A 30-second wait while someone is trying to figure out why their card was just declined at a restaurant is something else entirely. Abandonment in financial support correlates with anxiety, not patience. Every second of silence signals to the customer that the system cannot help them.

Most AI support vendors publish latency benchmarks from their best-case scenario: a simple FAQ lookup. The relevant benchmark for fintech is latency on a ticket that requires pulling account data, checking transaction history, verifying a dispute against merchant records, and making a decision. Those are very different numbers.

Voice makes this even more acute. When a customer calls about a frozen account and there's a multi-second delay before each response, it doesn't feel like talking to a support agent. It feels like talking to a system that's struggling. The customer's next move is predictable: "Can I speak to a person?"

Median (P50) latency is not the metric that matters here. P90 is: the response time that one interaction in ten meets or exceeds. The customers who experience your worst latency are disproportionately the ones in the most stressful situations - a declined mortgage payment, a blocked international transfer, a compromised account - and they are the ones whose loyalty is most at stake.
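To make the gap between P50 and P90 concrete, here is a minimal sketch using the nearest-rank percentile definition (one of several; NumPy's `percentile`, for example, interpolates by default). The latency values are hypothetical and purely illustrative: eight quick FAQ-style answers and two tickets that need account and transaction lookups.

```python
import math


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    if not samples:
        raise ValueError("need at least one sample")
    if not 0 < p <= 100:
        raise ValueError("p must be in (0, 100]")
    ordered = sorted(samples)
    # Integer multiply before dividing keeps the rank exact for whole-number p.
    rank = math.ceil(p * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


# Hypothetical response latencies in seconds (not measured data).
latencies = [0.6, 0.7, 0.7, 0.8, 0.8, 0.9, 0.9, 1.0, 4.5, 7.2]

p50 = percentile(latencies, 50)
p90 = percentile(latencies, 90)
print(f"P50 = {p50:.1f}s, P90 = {p90:.1f}s")
```

With these illustrative numbers the median looks healthy at 0.8 seconds, while P90 sits at 4.5 seconds, and that tail is exactly where the account-data tickets land.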

Guardrails for financial consequences

Fintechs need AI that knows what it cannot do.

Monthly compliance testing before go-live, mandatory human escalation for financial hardship and distress, regulatory caution so strict that any agentic action near financial advice triggers an immediate handoff. These are not edge cases in fintech; they are quite literally the operating environment.

The absence of pre-built financial guardrails in most AI support products reveals their heritage. A product designed for a world where the worst outcome is a wrong shipping estimate won't have the machinery to prevent the worst outcome in fintech: giving a customer incorrect information about their money, such as a wrong answer about a hardship process or an incorrect dispute outcome.

Inconsistent retrieval-augmented generation (RAG) is a symptom of the same problem. When your knowledge base contains regulatory guidance - and in fintech, it always does - "mostly accurate" is not an acceptable specification. A customer who receives incorrect information about a hardship process, a complaint pathway, or their rights under consumer protection law is not just poorly served. They are potentially harmed, and you are potentially liable.

The fintech CX leaders who push hardest on guardrails are telling you what the product needs to do. Configurable compliance testing, topic-level escalation rules for transactions, disputes, hardship, and account access - these are the minimum viable product for regulated support.
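As an illustration of what topic-level escalation rules might look like, here is a minimal sketch. The topic names, the `distress_detected` flag, and the routing policy are all hypothetical assumptions, not any vendor's actual configuration. It encodes two rules from above: hardship, complaints, and anything near financial advice go to a human, and detected distress overrides every topic rule.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    AI_RESOLVE = "ai_resolve"
    HUMAN_HANDOFF = "human_handoff"


# Hypothetical topic-level policy: which topics the AI may handle end to end.
ESCALATION_RULES: dict[str, Action] = {
    "transaction_dispute": Action.AI_RESOLVE,
    "account_access": Action.AI_RESOLVE,
    "financial_hardship": Action.HUMAN_HANDOFF,
    "complaint": Action.HUMAN_HANDOFF,
    "financial_advice": Action.HUMAN_HANDOFF,
}


@dataclass
class Ticket:
    topic: str
    distress_detected: bool = False  # hypothetical signal from an upstream classifier


def route(ticket: Ticket) -> Action:
    # Distress or vulnerability overrides every topic rule.
    if ticket.distress_detected:
        return Action.HUMAN_HANDOFF
    # Unknown topics fail closed: hand off rather than guess.
    return ESCALATION_RULES.get(ticket.topic, Action.HUMAN_HANDOFF)


print(route(Ticket("transaction_dispute")))
print(route(Ticket("transaction_dispute", distress_detected=True)))
```

The design choice worth noting is the default: a topic the rules don't recognize is handed to a human rather than answered, which is the conservative behavior a regulated support team would typically want.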

Pricing tells you what the vendor believes

Fintechs consistently push for usage-based pricing, short pilots, and per-resolution models. Vendors consistently push for 12-month commitments and upfront contracts; both sides think the other is being difficult.

A vendor who insists on an annual commitment before you've seen results is pricing around their retention risk, not your value. They know that once the contract is signed, switching costs will keep you locked in regardless of performance. A vendor who charges per resolution is making a different bet: that their product will actually resolve things - transaction disputes, account queries, payment failures, KYC verification support - not just deflect them to your queue.

The pricing structure tells you what the vendor believes about their own product.

When a CX leader asks for a 60-day pilot with success criteria instead of a 12-month lock-in, that's someone correctly identifying that the vendor's confidence should be legible in the deal structure. Short pilots are not a barrier to adoption; they are a quality filter.

The "safe" choice

When a fintech CX leader defaults to the market leader - the name their board won't question, the vendor with the most logos on their website - they are making a career-risk decision, not a product decision. That's rational, but it carries its own risk.

The market leader built their product for the broadest possible market. The regulated, high-stakes cases that define fintech support - disputed transactions, blocked transfers, hardship applications, frozen accounts - are not the use cases that shaped their architecture. Their product was optimized for the tickets that are easiest to automate, not the ones that matter most to your customers.

The relevant question is not "who is the safest vendor?" It's "safe for whom?" Safe for the buyer's career is not the same as safe for the customer whose hardship case gets hallucinated guidance. Safe for the procurement committee is not the same as safe for the compliance team that has to explain the audit trail, or the lack of one.

The vendor with the biggest brand has the least incentive to solve your hardest problems. They have enough easy problems to keep their metrics looking good.

The spec nobody wrote down

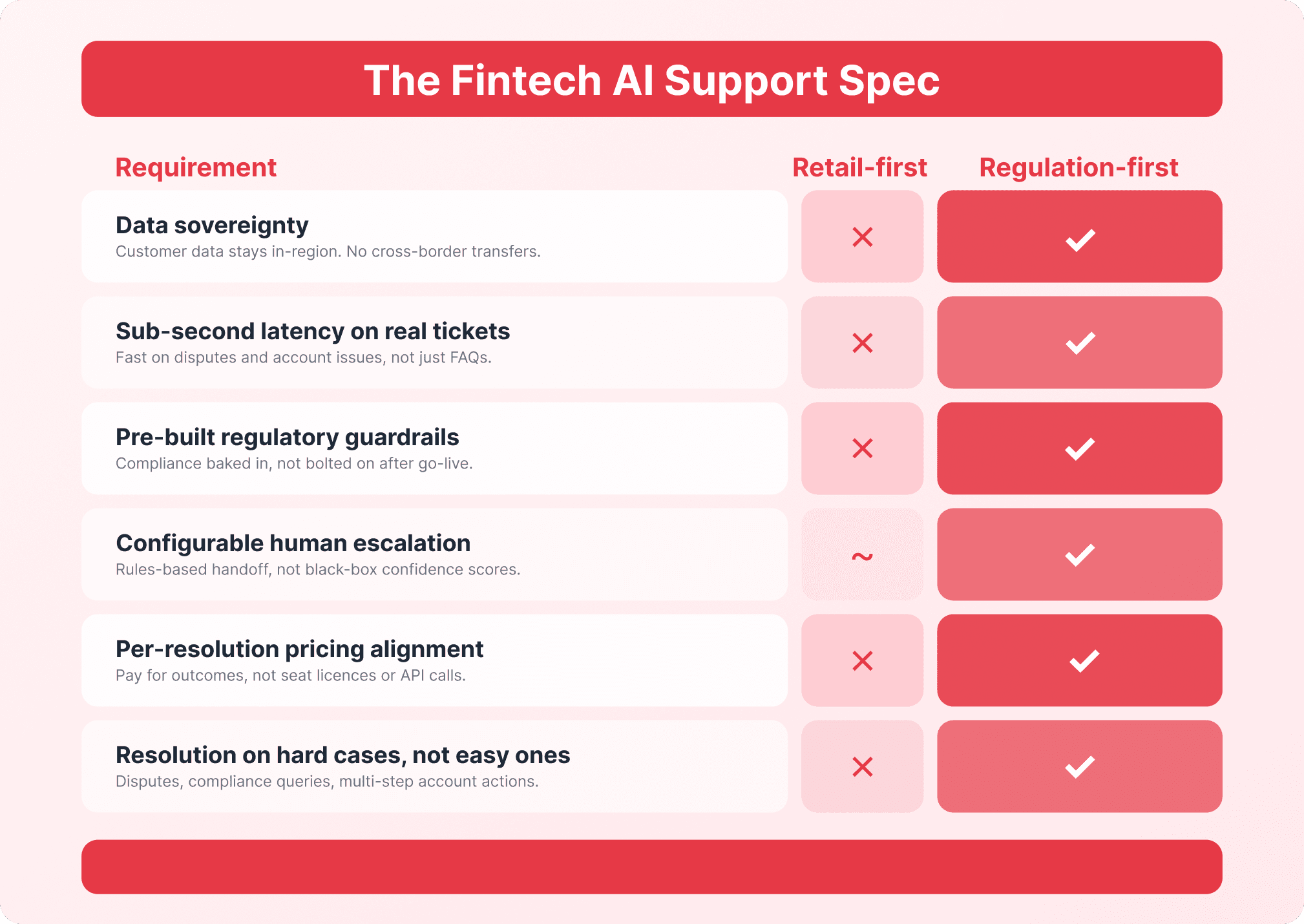

Every objection a fintech CX leader raises during an AI support evaluation is a line item in a spec that nobody has written down: data sovereignty; sub-second latency on real tickets (transaction disputes, account queries, payment failures), not FAQ lookups; pre-built regulatory guardrails for financial hardship, complaints, and advice boundaries; configurable human escalation for distress and vulnerability; pricing that aligns the vendor's incentives with yours; and resolution rates on the hard cases, not just the easy ones.

The vendors who meet this spec didn't retrofit it; they started there. They chose regulated industries deliberately, not because it was the fastest path to market, but because the hardest problems are the most defensible ones to solve.

At Lorikeet, this is the product we built. Per-resolution pricing, because we should only get paid when the customer's problem is actually solved; configurable guardrails with auditable performance, because "trust us" is not a compliance strategy; architecture designed for data sovereignty from day one, not bolted on after losing a deal; and a focus on the hardest 20% of tickets - transaction disputes, transfer blocks, hardship processes, account access issues - because that's where the value is for fintech customers.

The question for fintech CX leaders evaluating AI support is not whether AI works for regulated industries - it does. The question is whether the vendor you're evaluating was built for the problem you actually have.
