Last month we surveyed 1,083 consumers across eight countries. The first question was simple: when something goes wrong, would you rather have your problem handled by a human or by AI?

Seven percent picked AI.

If you stop reading there, the takeaway is the one every investor in AI customer service wishes weren't true: people prefer humans. The second question complicates that picture, though.

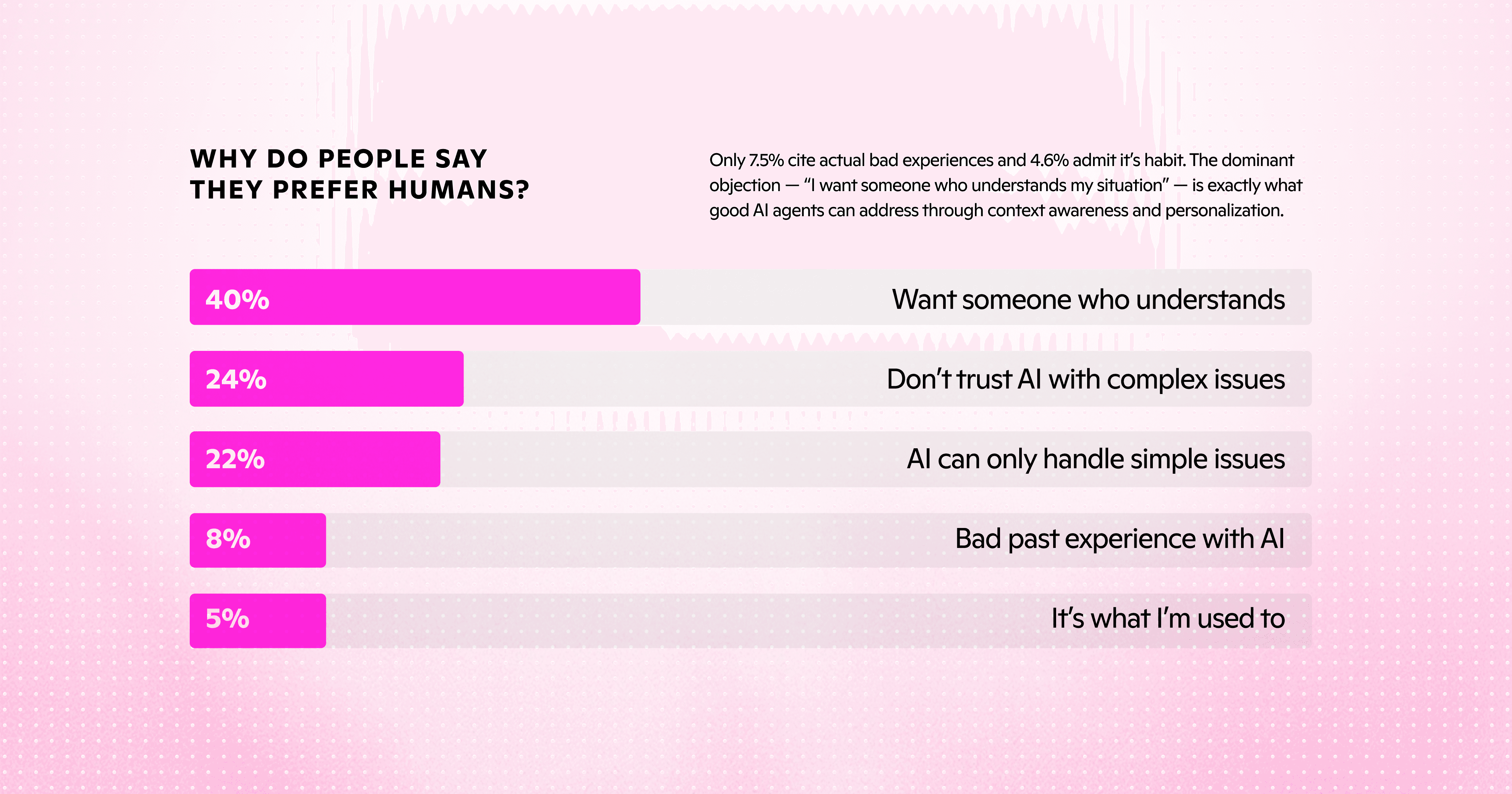

The reasons

We asked the people who said they preferred humans to tell us why. Forty percent want someone who understands their situation. Twenty-four percent don't trust AI with complex issues. Twenty-two percent think AI can only handle simple things. Eight percent had a bad past experience. Five percent said it was just habit.

None of those reasons is "I prefer the experience of being talked to by a person." Together, the top three reasons account for eighty-six percent of responses, and all three collapse to the same underlying claim: I want someone (or something) that can actually solve my problem, that knows who I am, and that I can trust to handle complexity. That reads less like a preference for people and more like a functional brief.

A context-aware AI agent that has read the customer's history, understands the policy, and can take action is built to meet that brief. The forty percent who want "someone who understands my situation" are not voting against AI; they're voting against being treated as a stranger.

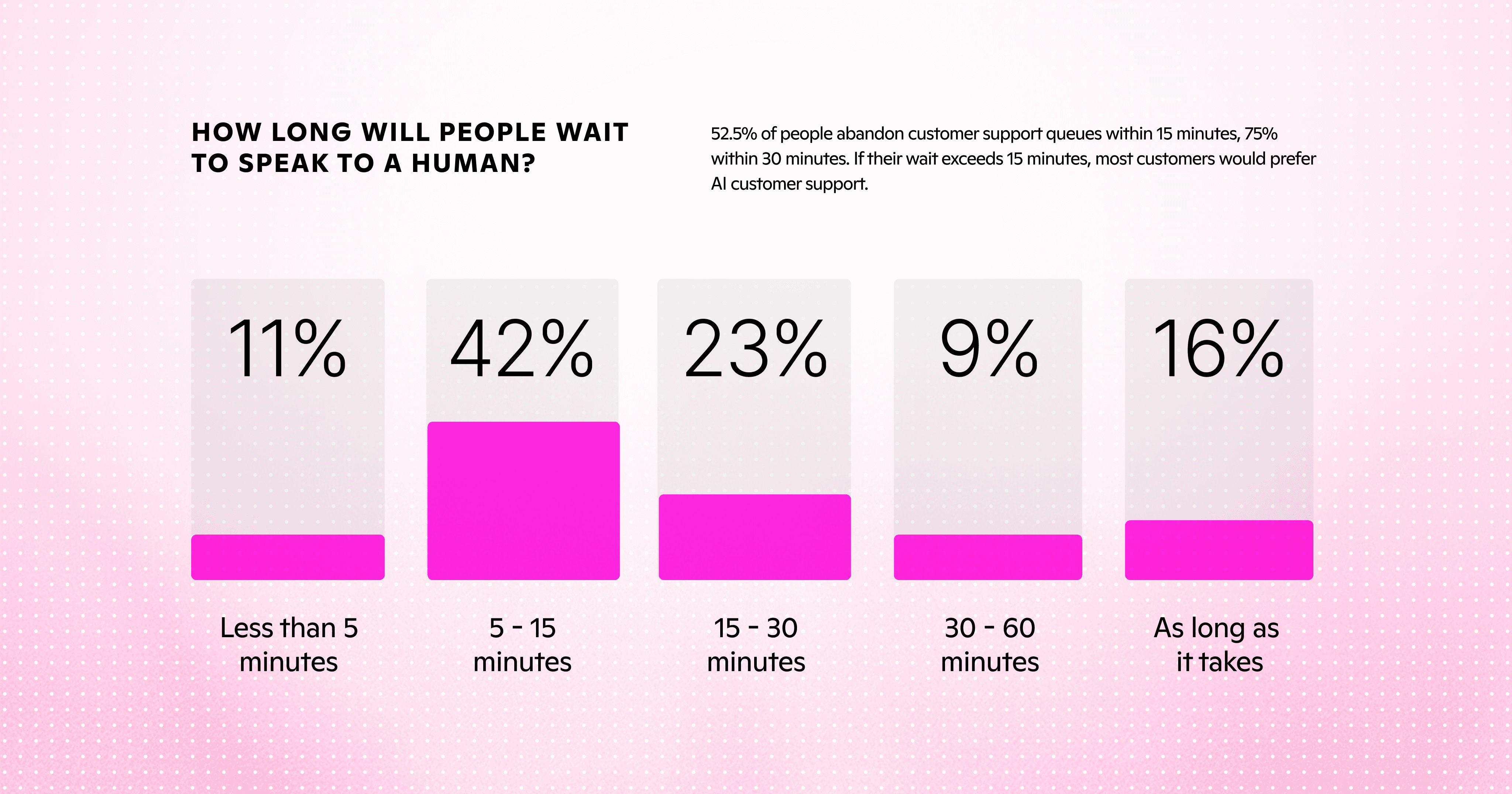

The wait

We then asked how long people would wait for a human before they'd switch to AI.

Eleven percent wouldn't wait more than five minutes. Forty-two percent would wait between five and fifteen minutes. Twenty-three percent would wait up to half an hour. Sixteen percent said they would wait as long as it takes.

Then we asked the original preference question again, this time with a wait time attached to the human option. AI preference jumped from seven percent to forty-four. Twenty-four points moved directly from team-human to team-AI the moment time entered the equation. Half of the human-preference cohort switches to AI within fifteen minutes; at thirty minutes, over three quarters have switched.
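The "half" and "three quarters" figures are running totals of the wait-tolerance buckets above. Here is a quick sketch of that arithmetic in Python. It assumes the buckets are mutually exclusive shares of the same respondents, and it leaves out the sixteen percent who would wait as long as it takes, since they never switch. The four reported buckets add up to ninety-two percent; the remainder isn't broken out here. The helper name is ours, for illustration only.

```python
# Reported wait-tolerance buckets, in percent of respondents.
# Assumption: buckets are mutually exclusive; the "as long as it takes"
# group (16%) is excluded because it never switches to AI.
buckets = [
    ("under 5 min", 11),
    ("5 to 15 min", 42),
    ("15 to 30 min", 23),
]


def cumulative_switch_share(buckets):
    """Return (label, running total) pairs: the share that has switched
    to AI by the end of each wait bucket."""
    total = 0
    out = []
    for label, pct in buckets:
        total += pct
        out.append((label, total))
    return out


for label, share in cumulative_switch_share(buckets):
    print(f"switched by end of '{label}': {share}%")
```

The running total reaches fifty-three percent at fifteen minutes ("half") and seventy-six percent at thirty ("over three quarters").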

This is a structural constraint that human-only teams can't solve at scale: there aren't enough humans in any contact center to answer each of 1.4 billion calls within fifteen minutes. Stated preference for humans is conditional on a service level the industry has never been able to deliver.

The privacy flip

We also asked which channel respondents preferred for sensitive personal topics, the kinds of issues most leaders assume require warmth: health, money, security. AI preference for these jumps from seven percent to twenty-one, three times the baseline. Forty percent say they don't care either way. Sixty-one percent of consumers either prefer AI for sensitive topics or are indifferent.

This is the part of the data the industry talks about least. AI doesn't judge, doesn't gossip, and treats your data the same way every time. For the topics where customers feel most exposed, those properties matter more than warmth.

For the verticals we were built for (fintech, healthcare, insurance), the general-population baseline understates AI affinity among our buyers' customers. Eucalyptus, an Australian telehealth company, deployed AI agents at the most sensitive end of its business: weight management, men's health, reproductive care. It handled three times its pre-AI ticket load with CSAT up ten points, and it isn't unusual.

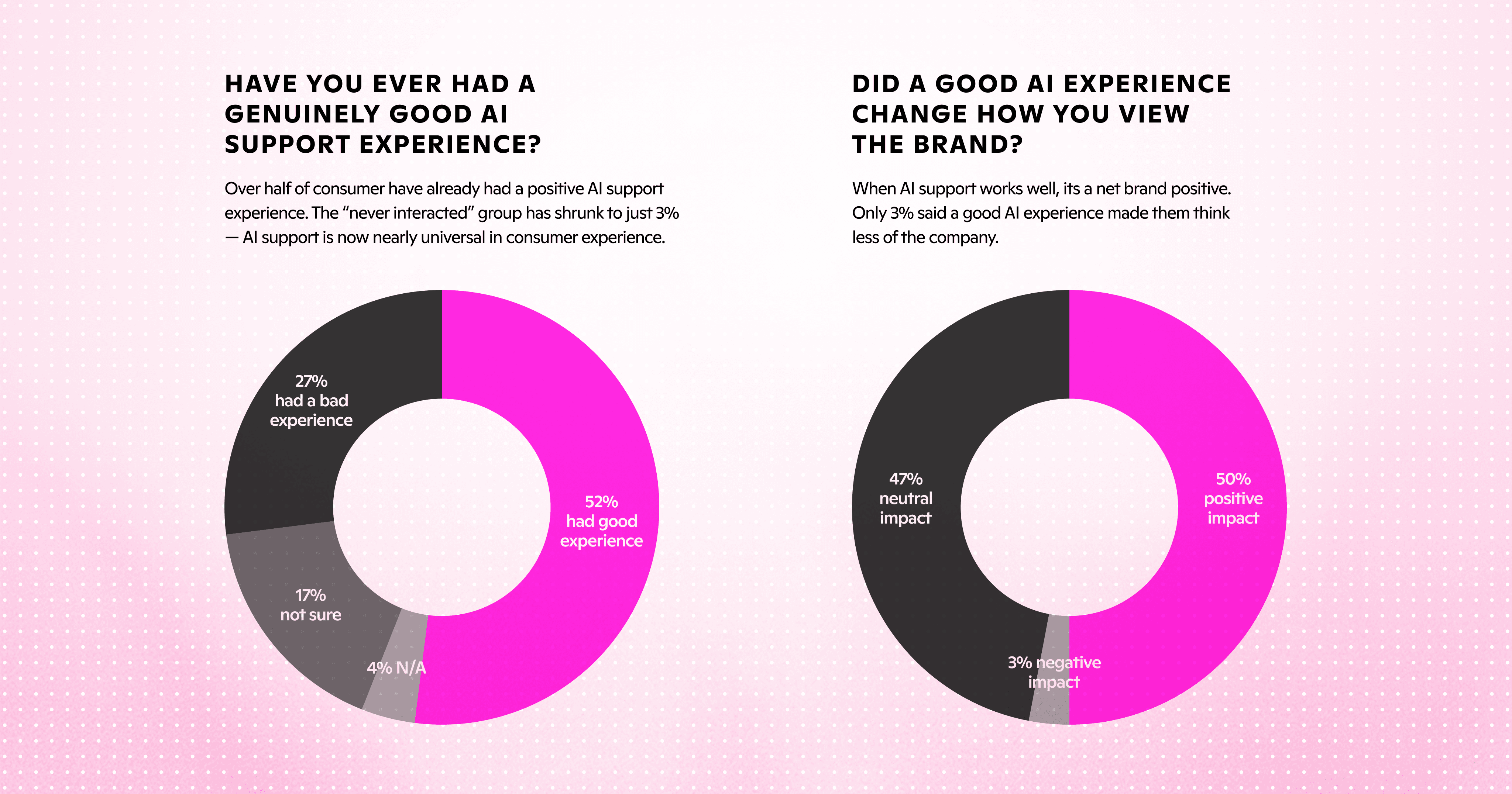

The bad chatbot debt

There's a depressing read of the headline number that's also the most useful one. Of the people in the survey who said they'd had any AI customer service experience, twenty-seven percent described it as bad. Only eight percent of the human-preference cohort cited an actual bad experience as their reason for preferring humans. Most of the stated preference tracks the chatbot wave of 2018 to 2023, when AI customer service meant a slow, brittle FAQ bot that bounced anything hard to a human queue. It was, hands down, a terrible experience. These numbers track the experience customers had then more than the capability of agents now.

That reputational debt will pay down as customers run into more agents that actually solve problems, but it won't pay down on its own.

What changes when you read past the headline

People don't reject AI in customer service the way the headline number suggests. The thing customers reject is AI that doesn't solve their problem, and the survey makes that clear: eighty-six percent of the reasons given for preferring humans are reasons modern context-aware agents can meet today.

The stated preference is also conditional. Half of the human-preference cohort switches to AI inside fifteen minutes of waiting. The structural advantage AI has in this category is availability, and most operators have under-deployed against it.

And sensitive topics flip the polarity. The verticals where the industry is most cautious about AI deployment are the verticals where customers are most open to it.

The question to ask is whether the AI you're shipping actually solves your customers' problems. Whether customers accept AI is downstream of that. The seven percent is a starting point for companies whose AI does the work. The companies whose AI summarizes help articles will spend the next decade explaining why their customers haven't graduated past it.

The full report is at lorikeetcx.ai/content/consumer-attitudes-toward-ai-cs-report.