A fintech customer calls about a failed deposit. The agent checks transaction status, verifies account ownership, looks up fraud signals, reviews previous tickets, checks policy exceptions, confirms identity, and initiates the refund. Seven API calls, conditional logic throughout, business rules that vary by customer segment. The customer just sees a conversation. This is what we built, and here’s how we did it.

Where we started

In 2024, we published a technical deep dive on Intelligent Graph, our architecture for deploying AI agents in complex support environments. At the time, pure agentic AI (giving an LLM instructions and letting it handle conversations however it likes) wasn’t ready for production. The failure modes were real: hallucinations, distraction, instructions degrading after half a dozen constraints.

The industry was learning this the hard way. Air Canada’s chatbot invented a refund policy and cost the airline real money in court. Sierra, one of the best-funded players in this space, has had public incidents where agents went off-script in ways that made customers uncomfortable. When you’re handling money or personal data, “usually works” isn’t good enough.

Intelligent Graph took a different approach. Structured workflows orchestrated everything. LLMs handled focused tasks inside: classify this intent, extract this value, generate a response from these specific talking points. The orchestration layer controlled the conversation, not the AI.

It worked. Hallucination rates dropped. Compliance teams could audit every decision. We could handle the conditional, stateful logic that pure chatbots couldn’t touch. And we built deep expertise in structured workflow design for regulated industries.

Models got better. The failure modes that made pure agentic approaches risky in 2024 decreased significantly. We saw an opportunity: what if we could combine the conversational flexibility of agentic AI with the guarantees we’d built into Intelligent Graph?

The key insight was that these weren’t competing approaches. They were complementary, operating at different layers.

Where we’re going

The evolved architecture puts a natural language agent at the top. It handles the conversation: tangents, clarifications, context switches. A customer in a refund flow asks “can I get store credit instead?” The agent can discuss that and continue without breaking anything.

But when the conversation reaches a critical operation (processing the refund, verifying identity, closing an account), the agent calls a structured workflow as a tool. Inside that tool, everything is deterministic. This is where Intelligent Graph lives now.

We call this Pockets of Determinism: agentic orchestration wrapping deterministic operations.

The logic is straightforward. An AI agent can trigger any tool at any time. That’s what makes it conversational. But we can make the tools themselves foolproof, so even if the agent triggers them incorrectly, nothing bad happens.

Think of a banking app. A child can tap “Close Account.” The button is always there, but the app checks for remaining balances before executing. The interface is permissive; the operation is strict.

As Jamie Hall, our CTO, explains:

“The agent can call the refund tool whenever it wants. But inside the tool, we check: has the agent confirmed with the customer? Have we disclosed the terms? If not, the tool returns ‘conditions not met’ and the agent goes back to do the work. Even if the agent hallucinates that conditions are met, the tool knows they’re not.”
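A minimal sketch of that check, in Python. The names here (`ConversationState`, `ToolResult`, `refund_tool`) and the two preconditions as boolean flags are assumptions made for illustration, not Lorikeet's actual implementation. The point it shows is that the tool reads system-recorded state rather than trusting what the agent says:

```python
from dataclasses import dataclass, field


@dataclass
class ConversationState:
    """Facts recorded by the system, not claims made by the agent (hypothetical)."""
    customer_confirmed: bool = False
    terms_disclosed: bool = False


@dataclass
class ToolResult:
    status: str                               # "executed" or "conditions_not_met"
    missing: list[str] = field(default_factory=list)
    refunded_cents: int = 0


def refund_tool(state: ConversationState, amount_cents: int) -> ToolResult:
    """Deterministic operation: validate preconditions, then execute."""
    missing = []
    if not state.customer_confirmed:
        missing.append("customer_confirmation")
    if not state.terms_disclosed:
        missing.append("terms_disclosure")
    if missing:
        # Nothing executes; the agent learns what it still has to do.
        return ToolResult("conditions_not_met", missing)
    # Stand-in for the real refund call, which is outside this sketch.
    return ToolResult("executed", refunded_cents=amount_cents)


# The agent calls the tool too early, then again once the system has
# recorded the customer's confirmation.
state = ConversationState(terms_disclosed=True)
first = refund_tool(state, 2500)
state.customer_confirmed = True
second = refund_tool(state, 2500)
```

In this sketch the first call returns `conditions_not_met` with the missing step named, and only the second call executes. The agent's own belief about whether the customer confirmed never enters the function.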

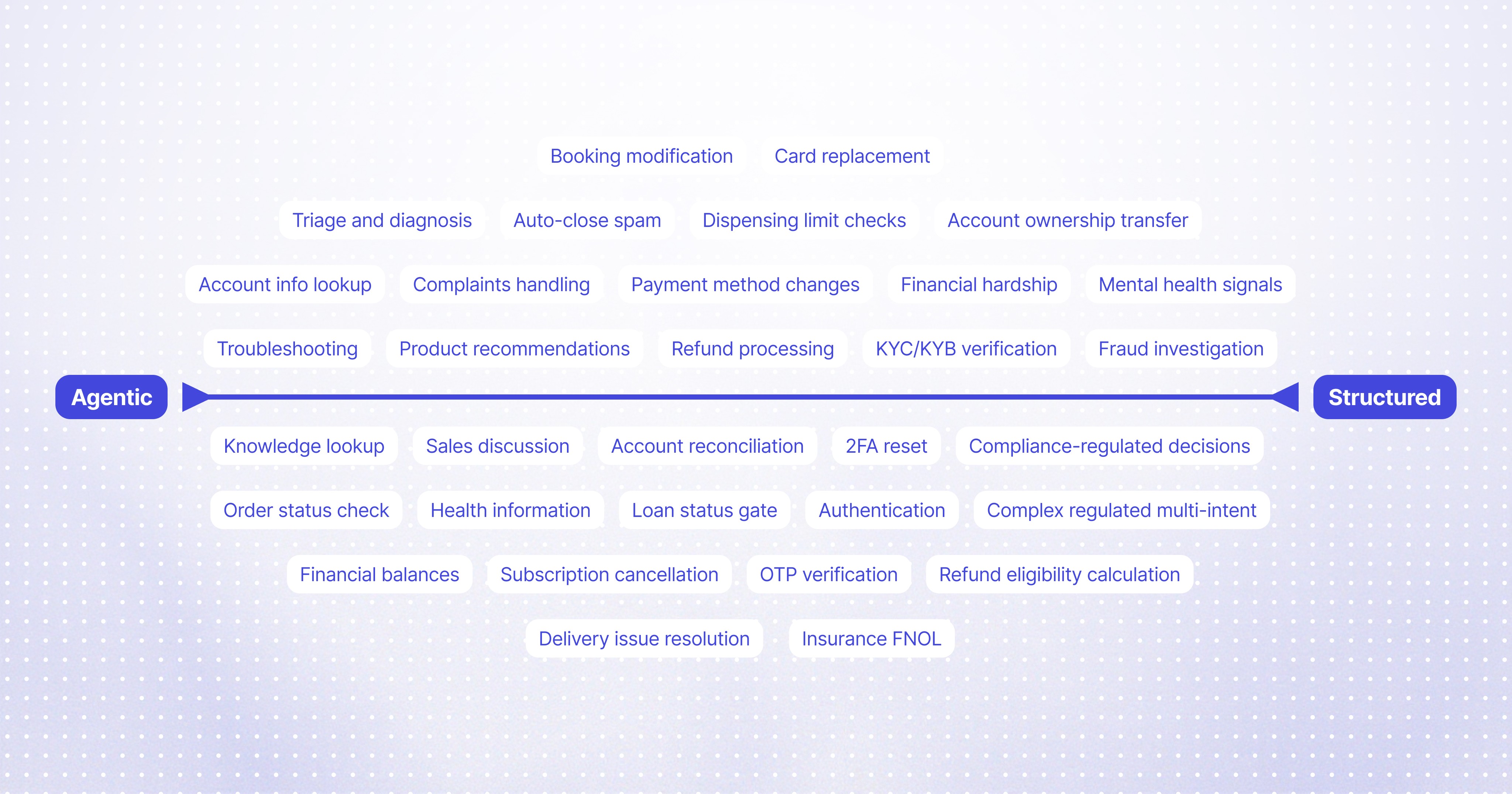

Every operation sits somewhere on a spectrum. A knowledge lookup can be fully agentic. A refund needs a conversational wrapper with deterministic execution inside. A compliance-critical flow might be almost entirely structured. You configure this per operation based on consequences.
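One way to picture the per-operation configuration is a simple lookup table. This is a sketch under assumptions: the three mode names, the operation keys, and the choice of account closure as the compliance-critical example are all invented here for illustration.

```python
from enum import Enum


class Mode(Enum):
    AGENTIC = "agentic"          # the agent handles it end to end
    WRAPPED = "wrapped"          # conversational wrapper, deterministic execution
    STRUCTURED = "structured"    # almost entirely a structured workflow


# Hypothetical mapping from operation to its place on the spectrum.
OPERATION_MODES = {
    "knowledge_lookup": Mode.AGENTIC,
    "refund": Mode.WRAPPED,
    "account_closure": Mode.STRUCTURED,
}


def mode_for(operation: str) -> Mode:
    # Assumption of this sketch: unknown operations get the strictest mode.
    return OPERATION_MODES.get(operation, Mode.STRUCTURED)
```

Defaulting unknown operations to the strictest mode is a design choice of the sketch: anything nobody has classified yet is treated as high stakes until someone decides otherwise.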

Why this matters

Most AI architectures put safety at the edges. Guardrails watch outputs and intervene when something looks wrong. The problem is that the agent has already decided to do something wrong by the time the guardrail catches it. You’re in damage control.

Pockets of Determinism puts safety inside the operations themselves. The tool validates preconditions before executing. You’re not catching mistakes. You’re making them impossible to execute.

There’s a security benefit too. When the agent calls a sub-workflow, that workflow runs in isolation. The agent doesn’t see internal state or sensitive data. If your verification flow uses a one-time code, the agent never has access to it. It just gets back “verified” or “not verified.” You can’t leak what you don’t have.
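The shape of that interface can be sketched in a few lines of Python. `VerificationFlow`, `send_code`, and `check` are hypothetical names, and `deliver` stands in for whatever out-of-band channel reaches the customer. A Python class alone does not enforce isolation; in a real deployment the boundary would presumably be a separate process or service. The sketch only shows what crosses that boundary:

```python
import hmac
import secrets


class VerificationFlow:
    """Sub-workflow that owns the one-time code (illustrative only)."""

    def __init__(self) -> None:
        # Six-digit code, generated and held inside the flow.
        self._code = f"{secrets.randbelow(10**6):06d}"

    def send_code(self, deliver) -> None:
        # `deliver` is a hypothetical out-of-band channel (SMS, email)
        # that goes to the customer, not through the agent.
        deliver(self._code)

    def check(self, submitted: str) -> str:
        # Constant-time comparison; only this string goes back to the agent.
        ok = hmac.compare_digest(self._code.encode(), submitted.encode())
        return "verified" if ok else "not verified"
```

The agent's view of this flow is the return value of `check`: one of two strings, with no code, attempt history, or account detail attached.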

The result

Flex, a buy-now-pay-later platform, went from 0% automation to 85%+ on complex support workflows in under a year. Breeze, a fintech handling transfer disputes and purchase credits, grew from 50% to 82% automation over nine months. These aren’t FAQ deflection numbers. They’re full resolution rates on multi-step, API-heavy, compliance-sensitive workflows.

Magic Eden runs both Lorikeet and Intercom’s Fin on different ticket segments. The CSAT comparison is stark: Lorikeet tickets score double what Fin tickets score, in the same customer base, side by side.

Pockets of Determinism is how we architect the agent. For how we validate and improve accuracy in production, see Defence in Depth.

FAQ

How do you prevent an AI agent from executing sensitive operations at the wrong time?

By validating preconditions inside the operation itself, not at the agent level. The agent can request a refund whenever it wants, but the refund tool checks whether all required steps have been completed before executing. If the customer hasn’t confirmed, or terms haven’t been disclosed, the tool rejects the request and tells the agent what’s missing.

Can AI agents access customer data they don’t need?

Not if the architecture isolates sub-workflows. When an agent calls a verification flow, that flow runs in its own context. The agent receives the result (“verified” or “not verified”) but never sees internal state like one-time codes or sensitive account details. You can’t leak what you don’t have access to.

What happens if an AI agent hallucinates that conditions are met?

The tool catches it. Deterministic operations validate their own preconditions against actual system state, not against what the agent claims. If the agent says “customer confirmed” but no confirmation exists in the conversation log, the tool knows and rejects the request.

How do you balance conversational AI with compliance requirements?

By choosing the right mix per operation. Knowledge lookups can be fully agentic. Refunds need a conversational wrapper with deterministic execution inside. Compliance-critical flows might be almost entirely structured. You configure this based on consequences: low stakes get flexibility, high stakes get structure.

What’s the difference between guardrails and pockets of determinism?

Guardrails watch agent outputs and intervene when something looks wrong. By then, the agent has already decided to do something wrong. Pockets of determinism put validation inside the operation, so mistakes can’t execute in the first place. It’s the difference between catching errors and preventing them.
